REVIEW: Galois Theory, by David Cox

Galois Theory, David Cox (Wiley-Interscience, 2004).

It usually felt like the job of my teachers was to crush my desire to learn things about the world. For example I still remember the math class early in high school where a teacher derived for us the formula that allows you to find the roots of any equation that’s quadratic in a single variable. I was impressed, so naturally I asked the obvious followup: “what about cubic equations?”

My teacher smiled; he was prepared for this one. In fact, yes, there was a cubic formula. He copied it from a sheet of paper onto the blackboard, and we all marveled at its ungainliness. That just got me more excited, and I raised my hand again: “what about quartic equations? Is there a formula for those too?” My teacher frowned and stammered, “uh, yeah, I’m sure there is.” But I was just picking up steam: “what about quintic equations? Or higher order ones? Is there a formula for all possible finite degree polynomials? What if there isn’t?” The teacher glared and snapped: “that’s a stupid question, who cares.” Everybody laughed. I think I wound up with a C+ or a B- in that class.

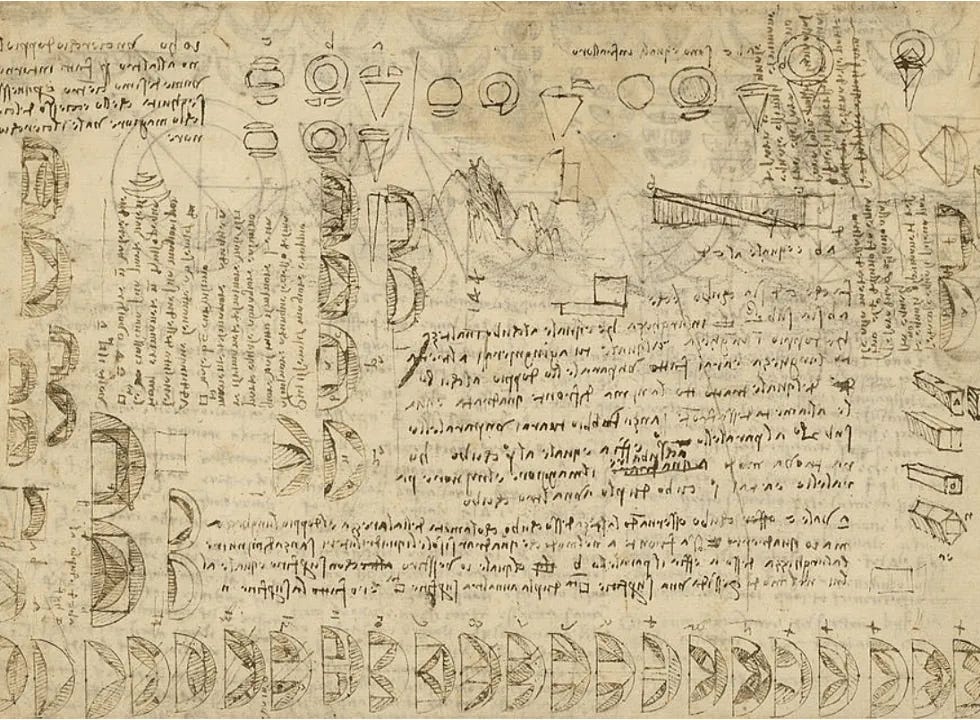

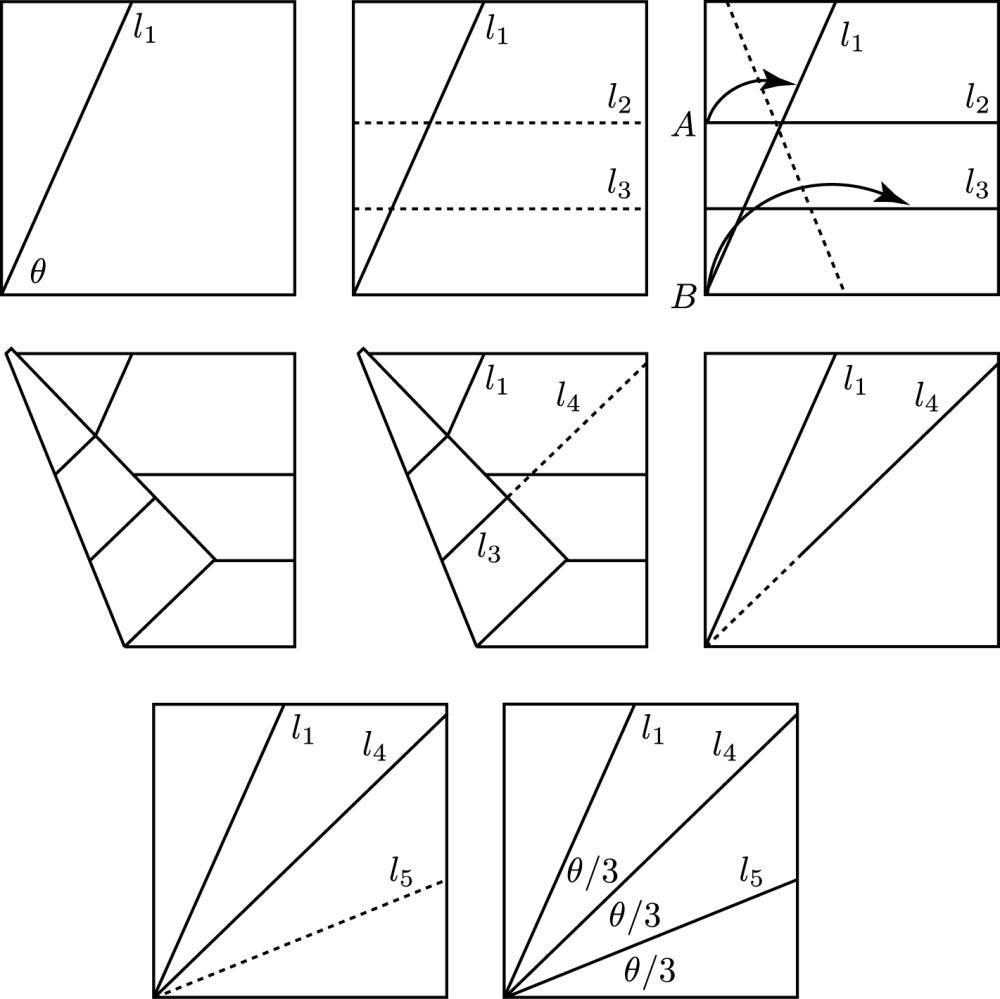

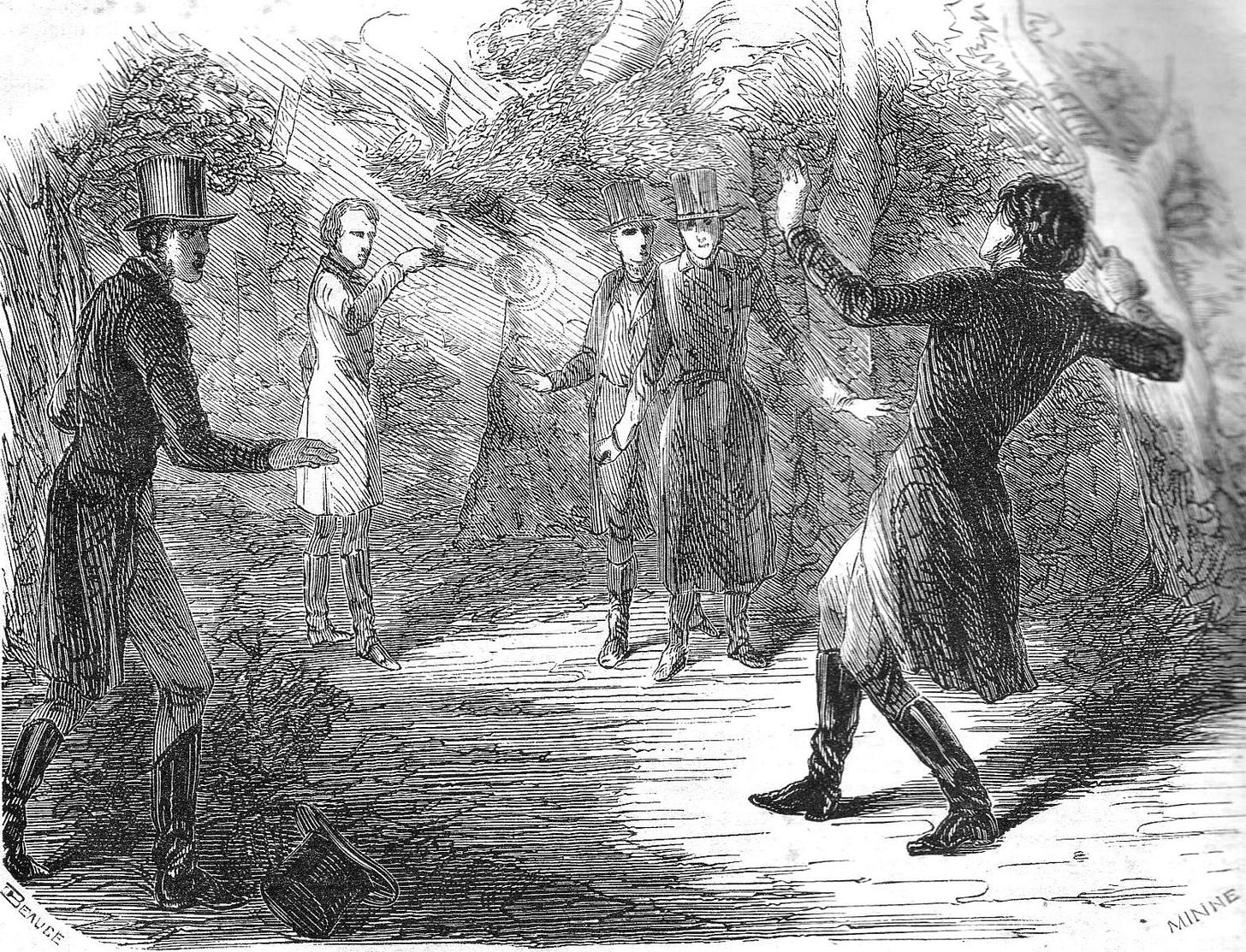

Little did I know that the question had been conclusively settled by a different young man who’d had difficulty in his math classes. Évariste Galois (1811-1832) had a short and unimpressive life punctuated by conflict with teachers and peers. In his 20th and final year he was expelled from university and then killed in a duel. A couple nights before he was left bleeding out on the pavement, however, he poured out his mathematical ideas in a letter to his friend Auguste Chevalier. The ideas in that letter created an entirely new branch of mathematics and settled several age-old questions, including the one I had posed to my teacher, but also including such ancient classics as the impossibility of trisecting an angle or squaring a circle with straightedge and compass.

Reading higher mathematics textbooks is frequently unpleasant due to their extreme concision. These books are like some extremely dehydrated and concentrated spice mixture, or a blob of ultradense neutron star matter. Sometimes a single sentence in them, often breezily introduced with a phrase like “Thus it obviously follows that…”, can require hours or days of intensive thought to fully comprehend. I once charitably assumed that they were all like this because the authors were all people much smarter than me, for whom it really was obvious.[1] Since then I’ve learned that this generally isn’t so: the state of advanced mathematics textbook writing is the result of a hellish aesthetic preference for boiling ideas down to their driest and most compressed fundamentals, combined with a bit of old-fashioned one-upmanship to see who can produce the scariest and most hostile text.

So a lot of credit goes to David Cox for resisting this temptation and writing a textbook that is designed to be read. He does this in a number of different ways, but perhaps the most shocking and iconoclastic is his decision to present the subject in the context of its historical development. Mathematical expositors have a tendency towards the opposite: they prefer to present a body of theory in its finished form, like a perfect, polished gemstone. Usually the final form of a theory is extremely abstract, which makes it powerful and can lay bare its connections to other, seemingly unrelated branches of mathematics. But while this final, “pure” version of the theory might be the best one to have in your head once you already understand it, it can also be the most difficult one to digest and learn. Cox breaks from convention, and instead teaches you several different versions of the theory, an escalating ladder of abstraction, full of the twists and turns and blind alleys and false starts that accompanied its historic development.

The quadratic formula isn’t very hard to figure out for yourself: the Egyptians, Babylonians, Greeks, Indians, and Chinese all discovered it independently and fairly early in the history of their civilizations. The cubic formula is much harder, and was only discovered in a single civilization, that of Renaissance Italy, though it was probably discovered multiple times by competing scholars, each of whom took it to his death bed as a sort of “trade secret”. The quartic formula is harder still, and was discovered by one man: Lodovico Ferrari, a student of Gerolamo Cardano, who published it in his Ars Magna (1545). Formulas like these were used to triumph over rivals in a series of public mathematical contests that functioned a bit like rap battles[2] (yes, really).

So the hunt was on. Who would be the first to find a quintic formula, one that allowed you to solve equations like $ax^5 + bx^4 + cx^3 + dx^2 + ex + f = 0$?

The answer is that nobody would, because it turns out such a formula is impossible. I think most mathematicians find this fact deeply shocking on an intuitive level, because 5 seems like such a funny number for a trend to stop at! The “sensible” answers when you ask how many instances of a mathematical object exist are: 0 (the thing you’re imagining is impossible or paradoxical), 1 (the thing you’re imagining actually exists), and infinity (the thing you’re imagining is underspecified, so there are an infinite set of variations on it).[3] But this one works until you get to 5, and then stops working forever? What's going on here?

At first blush, Galois theory has nothing at all to do with this question. Galois theory is related to the study of symmetry, in an especially abstract sense. But think about it some more, and a connection emerges. You may remember from school that quadratic equations have two answers, and that neither one is the “best” one. That means the quadratic formula needs to somehow manage to be one formula giving you two different answers, a trick it manages with that “plus or minus” thing.
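To make the “plus or minus” trick concrete, here is a small worked version in standard notation. The symbols $a$, $b$, $c$ and $x_\pm$ are just the usual textbook names, not anything taken from Cox’s book. Flipping the sign of the square root swaps the two roots, while the combinations that feed back into the equation’s coefficients stay exactly as they were.

```latex
\begin{align*}
ax^2 + bx + c = 0 \quad &\Longrightarrow \quad x_{\pm} = \frac{-b \pm \sqrt{b^2 - 4ac}}{2a}, \\
\sqrt{b^2 - 4ac} \;\mapsto\; -\sqrt{b^2 - 4ac} \quad &\Longrightarrow \quad x_{+} \leftrightarrow x_{-}, \\
x_{+} + x_{-} = -\frac{b}{a}, \qquad & x_{+}\,x_{-} = \frac{c}{a} \quad \text{(unchanged by the swap)}.
\end{align*}
```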

If there were a formula for fifth-order equations, it would have to give you five answers, but beyond that, because none of them is “best”, it would need to be totally symmetric under any procedure that caused the various answers to swap places with each other. The quadratic formula has this property: you can “flip” it into a mirror-world where the two answers it gives you are still the two answers it gives you, they’ve just swapped places. But for any number of answers greater than four, the set of all possible symmetries of those answers is complicated enough that no formula written with standard algebraic symbols could respect it.
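The claim that things break down past four answers can be checked by brute force. The standard criterion is that an equation is solvable by radicals exactly when its group of root symmetries is “solvable”, meaning that repeatedly taking commutator subgroups eventually shrinks the group to the identity. The general degree-n equation has the full symmetric group on n roots as its symmetry group. What follows is only a minimal sketch in plain Python, with illustrative function names that are my own rather than the book’s. It represents each rearrangement of n roots as a tuple and prints the sizes of the successive commutator subgroups.

```python
from itertools import permutations


def compose(p, q):
    """Return the permutation that applies q first, then p."""
    return tuple(p[q[i]] for i in range(len(q)))


def inverse(p):
    """Return the inverse of permutation p."""
    inv = [0] * len(p)
    for i, image in enumerate(p):
        inv[image] = i
    return tuple(inv)


def generated_subgroup(generators, n):
    """Close a set of permutations under composition.

    In a finite group, closure under products already contains inverses.
    """
    identity = tuple(range(n))
    group = {identity}
    frontier = [identity]
    while frontier:
        g = frontier.pop()
        for s in generators:
            h = compose(g, s)
            if h not in group:
                group.add(h)
                frontier.append(h)
    return group


def commutator_subgroup(group, n):
    """Subgroup generated by all commutators a*b*a^-1*b^-1."""
    commutators = {
        compose(compose(a, b), compose(inverse(a), inverse(b)))
        for a in group
        for b in group
    }
    return generated_subgroup(commutators, n)


def derived_series_orders(n):
    """Sizes of S_n and its successive commutator subgroups, until they stop shrinking."""
    group = set(permutations(range(n)))
    orders = [len(group)]
    while True:
        derived = commutator_subgroup(group, n)
        if len(derived) == len(group):
            return orders
        group = derived
        orders.append(len(group))


def is_solvable(n):
    """S_n is solvable exactly when the series reaches the one-element group."""
    return derived_series_orders(n)[-1] == 1


for n in range(2, 6):
    print(n, derived_series_orders(n), is_solvable(n))
# 2 [2, 1] True
# 3 [6, 3, 1] True
# 4 [24, 12, 4, 1] True
# 5 [120, 60] False
```

For two, three, and four roots the series collapses all the way down to a single element. For five roots it gets stuck at a 60-element group (the even permutations) that is its own commutator subgroup, which is the group-theoretic obstruction behind the missing quintic formula. The brute-force approach gets slow quickly, so this is meant for small n only.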

But we can apply this trick to all kinds of things beyond solutions of algebraic equations. Think of it as a generalized version of Buridan’s ass — if every solution to your problem must have some symmetry, that can tell us a tremendous amount about the form the solution must take, or about whether it even exists. This idea shows up all over physics, where it’s commonly known as “Curie’s principle”. It’s also a powerful heuristic in every kind of engineering and in computer science. Sadly I haven’t yet heard of it being convincingly applied to politics or strategy or war (outside of things like pure game theory).

The entire second half of Cox’s book consists of taking this powerful body of theory laboriously achieved in the first half, and using it to crush one age-old mathematical quandary after another. There’s a pure joy to this, like a toddler who’s learned to flip light switches on and off, and now runs around trying every switch in the house; or a high-schooler who’s learned a new argumentative technique or rhetorical trick and deploys it at every turn like she’s the first person who’s ever thought of it.

There’s no shame in this. Cox has given us a shiny mathematical power-tool, and now he stands there, grinning, as we use it to deconstruct every object in our vicinity. Consider the impossibility of trisecting an arbitrary angle with straightedge and compass. The Greeks struggled for centuries to prove this, but despite Herculean intellectual efforts were never able to seal the deal. Then: “BZZZZZRRRRAAAWWW”, Galois theory slices through the problem in seconds. It’s obvious why you can’t trisect an angle that way[4] (and, fascinatingly, also obvious why you can do it if you’re allowed to make origami folds). Some of the “applied” topics are a little more abstruse, like cyclotomic polynomials and Abel’s theorem on the lemniscate, but in every case it slices, it dices, and questions that had withstood mathematical attack for centuries are reduced to rubble.
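For the curious, the trisection argument can be sketched in a few lines. This is the standard textbook route, offered as an illustration rather than as the exact presentation in Cox’s book. A 60° angle is easy to construct, so if trisection were always possible we could construct 20°, and with it the length $x = \cos 20^\circ$. The triple-angle identity pins down the equation $x$ must satisfy:

```latex
\begin{align*}
\cos 3\theta &= 4\cos^3\theta - 3\cos\theta, \\
\tfrac{1}{2} = \cos 60^\circ &= 4x^3 - 3x \qquad (x = \cos 20^\circ), \\
0 &= 8x^3 - 6x - 1 .
\end{align*}
```

The only candidates for rational roots of $8x^3 - 6x - 1$ are $\pm 1, \pm\tfrac12, \pm\tfrac14, \pm\tfrac18$, and none of them works, so the cubic is irreducible over the rationals and $x$ generates a field extension of degree 3. Straightedge and compass can only take square roots, so every constructible length lives in an extension whose degree is a power of 2, and 3 never divides a power of 2. Origami folds, by contrast, can solve cubic equations, which is why paper folding succeeds where the compass fails.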

It’s fun. Maybe a bit too fun. I opened this review with one terrible intellectual habit school taught me, and I’ll close with an even worse one that I didn’t fully unlearn until I was in my 20s. I have a pet theory that one reason so many child prodigies never amount to anything is that when you’re very smart, most things come to you easily, and consequently you never learn the mental and emotional tools necessary for grappling with problems that aren’t easy. I was not a child prodigy, but my educational surroundings weren’t exalted either, so I don’t think I encountered a single mathematical idea that I couldn’t instantly grasp until I reached college.

All of this had catastrophic consequences for my intellectual development. A lifetime of school being too easy caused me to subconsciously internalize a conviction that learning was effortless if done correctly, and that needing to struggle or practice was prima facie evidence that one had chosen the wrong subject. A friend of mine who’s a professional athlete once told me that a similar pathology is common among the most preternaturally gifted child athletes. Everything is easy, so they never develop habits of practice, stick-to-itiveness, mental fortitude, or grit. Then one day, it’s no longer easy, falling back on natural ability is no longer enough, and they’re unable to progress any further.

What makes this sickness especially insidious is that it naturally gets tangled together with pride. The smart or sporty kid relishes the fact that things come easily to him, derives feelings of satisfaction and worth from the fact that unlike these other mortals he doesn’t need to practice or study. When one day that changes and coasting is demonstrably no longer good enough, to begin practicing or studying isn’t just difficult because it means developing a new habit at a time of great stress, it’s difficult because it’s an attack on his very identity. Much easier to turn away and reject the activity itself as dumb or pointless or “not what I’m good at”, or to tell a consoling story about how “I would have succeeded if I’d tried”, and then go find something else at which he can succeed effortlessly. The longer this lasts, the harder it is to break out of the cycle, because to do so now additionally means owning up to all the things you could’ve been good at if you hadn’t had your head up your ass.

Something like this pattern had a hold over the young Évariste Galois. You can see its outlines in the remembrances of his teachers, who universally praised his brilliance while bemoaning his laziness and prickliness. It’s even more apparent in his decision to walk into his university entrance exams with zero preparation, with the consequence that he attended a far inferior school to the one he should have gone to.

Like Galois I didn’t try in school and blew off my examinations, but unlike him my reckoning was postponed and I got into the university I wanted to anyway. How odd that it turned out to be this topic, Galois theory, and this book in particular, by David Cox, that provided me with the first faltering steps out of the epistemic abyss I’d created for myself. Unlike Galois, I wasn’t smart enough to get Galois theory immediately and intuitively. But I could see the beauty in it, see that there was something powerful and terrible and wonderful in those equations, something that I wanted but couldn’t have.

So I did something most uncharacteristic and applied myself to it, spent long hours in the library trying to figure it out. I finally grasped it a few months after my 20th birthday, almost exactly the age that Galois was when he refused an offer of last rites and died with a bullet in his guts, defiant to the end. Like mathematics, our lives are framed by fearful symmetries. But unlike in the Platonic realm, down here we have both a blessing and a curse: any pattern can end, any symmetry can be broken.

[1] I once had a professor who frequently asserted in class that things were obvious when they were extremely non-obvious to me, but in his case I think he really was a genius and he genuinely didn’t understand why something had to be explained further. I turned this situation to my advantage by answering a question on his final exam with: “it’s obvious”, and got nearly full credit.

[2] Cardano’s rapper-cred is bolstered by the numerous allegations of sexual impropriety and heresy that dogged him, and by finally being surpassed by a guy named “Ferrari”. He made his biggest splash, however, via the casus irreducibilis, a genre of cubic polynomials that has entirely real roots, but where one or more of those roots can only be reached via imaginary numbers. This exploded the uneasy compromise whereby Renaissance mathematicians had made free use of imaginary numbers while simultaneously denying their ontological reality.

[3] Arguably category theory has made mathematicians more receptive to “2” as a reasonable number of something to have: the thing and its categorical dual.

[4] I once stumbled across a work of quack mathematics at a public library that purported to “prove” trisectability with straightedge and compass, and in my fury at this dishonoring of Galois’ achievement I seized the book and reshelved it in the fiction section.

There's a story about Laplace, the great French mathematician. Much of his work was focused on "Celestial Mechanics", which is the mathematics of planetary orbits. At the end of his life, he decided to write a book summarizing all his discoveries. Unfortunately, he found that there were some results that he had proved many years ago, but now he had forgotten the proof, and couldn't reconstruct it. Whenever this happened, he dealt with the problem by writing, "It is easy to see that . . ."

"What immortal hand or eye,

could frame thy fearful symmetry?"

For years my favorite piece of data (taped to my office door) had those words scribbled on the bottom*. So I was never the smartest in my class, not even close. But I do wonder if (worry that) we don't support, challenge, encourage, our best and brightest kids enough. Or maybe that is best left to the family? (I was going to write that bright kids are a precious resource, but that just sounds insulting to everyone.)

*Far-IR spectroscopy of semiconductors, a symmetry in the electron and hole wavefunctions allowed us to designate the exciton absorptions we were observing... details not important.